Kubernetes is an open-source orchestration tool that automates the deployment of distributed microservices packaged as one or more Linux containers (such as Docker containers). It lets you define your application declaratively in one central place. From that definition, Kubernetes can deploy your whole application across a distributed environment and handle complex use cases like replication. But where did Kubernetes originate? It was developed at Google, one of the early contributors to and adopters of Linux containerization. Google's internal container orchestration project was dubbed Borg (a Star Trek allusion).

Today's Kubernetes builds on the lessons learned from Borg. Google open-sourced the project and later donated it to the Cloud Native Computing Foundation. Kubernetes is also known as k8s (the letter k, eight letters, and the letter s), “kube,” and “kubes” (pronounced “coob” and “coobs”). With over 50,000 changes in the last three years, it is one of GitHub’s most popular open-source projects. Many enterprises worldwide run it in both development and production.

Why Should You Use Kubernetes?

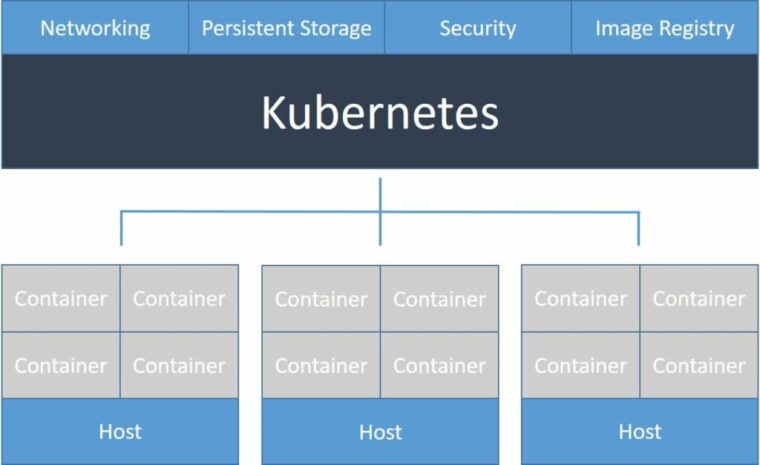

In brief, software deployments are gradually shifting away from monolithic legacy applications and toward microservices. Deploying a legacy application is simple: install the application on a virtual machine, then run copies of that virtual machine on several physical hosts. Deploying microservices is substantially more difficult. It takes work to set up the networking so that all application components can communicate. Load balancing your front end for high availability can take nearly as much engineering as developing the application itself. What about storage and security? And what tools should you use for all of these tasks? Kubernetes abstracts away much of this extra complexity, allowing your developers to focus on building excellent products rather than figuring out how to deploy them.

There are several advantages to deploying your application as microservices. Without going into too many technical specifics, they include increased stability and reliability, lower overhead costs, a better user experience, and natural integration with continuous integration and continuous deployment (CI/CD) systems. Kubernetes helps you realize all of these benefits.

What You Get Out Of The Box

Kubernetes is a highly effective orchestration tool. It allows you to run your containerized microservice application in a fully distributed environment, which makes it well suited to the transition from monolithic applications to distributed microservices. With Kubernetes you can:

- Orchestrate your application across a distributed cluster.

- Build highly resilient applications through scaling and load balancing.

- Automate your deployments, updates, and rollbacks.

- Manage persistent storage.

- Monitor the health of your application.

Kubernetes also provides the following features.

Automatic Bin Packing – Places each container on a host based on its resource requirements (such as CPU and memory) and other constraints, so that cluster resources are used as efficiently as possible.
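To make the idea concrete, here is a minimal sketch of resource-aware placement. It assumes a simple first-fit-decreasing heuristic over CPU requests measured in millicores. This is an illustration only: the real Kubernetes scheduler filters and scores nodes using many more signals, and the function name, container names, and node names below are hypothetical.

```python
from typing import Dict, Optional


def place_containers(
    requests: Dict[str, int], capacity: Dict[str, int]
) -> Dict[str, Optional[str]]:
    """Assign each container to the first node with enough free CPU.

    requests: container name -> CPU request in millicores.
    capacity: node name -> allocatable CPU in millicores.
    Returns container name -> node name, or None if nothing fits.
    """
    free = dict(capacity)  # remaining CPU per node
    placement: Dict[str, Optional[str]] = {}
    # Place the largest requests first so big containers are not stranded.
    for name, cpu in sorted(requests.items(), key=lambda kv: -kv[1]):
        placement[name] = None
        for node in free:
            if free[node] >= cpu:
                free[node] -= cpu
                placement[name] = node
                break
    return placement


demo = place_containers(
    {"web": 500, "db": 1500, "cache": 700},
    {"node-a": 2000, "node-b": 1000},
)
print(demo)
```

In this toy run, `db` and `web` share `node-a` and `cache` lands on `node-b`, because `cache` no longer fits next to `db`.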

Self-healing – Kubernetes restarts failed containers and, if a node fails, reschedules that node's containers onto healthy nodes, maximizing application uptime.

Horizontal Scaling – Scale your application horizontally across your distributed cluster using the command line, the user interface, or automatically based on resource utilization.
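For the automatic case, the Horizontal Pod Autoscaler documentation describes the core calculation as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The sketch below applies that formula and clamps the result to a minimum and maximum. The function name and the default bounds are assumptions for illustration, and the sketch leaves out the tolerance band and stabilization behavior of the real autoscaler.

```python
import math


def desired_replicas(
    current: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Scale the replica count in proportion to metric / target, then clamp."""
    raw = math.ceil(current * (current_metric / target_metric))
    return max(min_replicas, min(max_replicas, raw))


# Three replicas at 90% average CPU with a 60% target.
print(desired_replicas(3, current_metric=90, target_metric=60))  # prints 5
```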

Service Discovery And Load Balancing – Each pod in Kubernetes gets its own IP address. Multiple instances of the same pod can be exposed under a single internal DNS name, and Kubernetes load balances traffic across them automatically.

Automated Rollouts And Rollbacks – When you update your application, for example with new code or configuration, Kubernetes rolls out the change progressively while monitoring application health. If something goes wrong, Kubernetes rolls the change back to restore your application.

Secrets And Configuration Management – You can deploy and update secret information (such as usernames and passwords) and application configuration for your microservices without rebuilding your container images.
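As a rough illustration, the sketch below builds a Secret manifest as a Python dictionary and prints it as JSON, which `kubectl` accepts alongside YAML. The helper name, the secret name `db-credentials`, and the credential values are hypothetical. Note that values under `data` are only base64-encoded, which is an encoding and not encryption.

```python
import base64
import json


def make_secret(name: str, values: dict) -> dict:
    """Build a Secret manifest; values under `data` must be base64-encoded."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": {
            key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in values.items()
        },
    }


secret = make_secret("db-credentials", {"username": "app", "password": "s3cr3t"})

# Container spec fragment that reads the password from the Secret at run time,
# so the image itself never contains the credential.
env_entry = {
    "name": "DB_PASSWORD",
    "valueFrom": {"secretKeyRef": {"name": "db-credentials", "key": "password"}},
}

print(json.dumps(secret, indent=2))
```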

Storage Orchestration – Automatically mounts the storage system of your choice for your applications, whether local storage, cloud storage, or a network volume such as NFS.

Batch Execution – In addition to long-running services, Kubernetes can manage batch workloads, replacing containers that fail so that the work still runs to completion.
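In Kubernetes, a batch workload is typically described as a Job. The sketch below builds a minimal Job manifest as a Python dictionary and prints it as JSON. The job name, image, and command are placeholders chosen for illustration. `backoffLimit` caps how many times failed pods are retried.

```python
import json

job = {
    "apiVersion": "batch/v1",
    "kind": "Job",
    "metadata": {"name": "nightly-report"},
    "spec": {
        "completions": 1,   # the Job is done after one successful run
        "backoffLimit": 3,  # give up after three failed attempts
        "template": {
            "spec": {
                # Jobs require Never or OnFailure here; with Never,
                # a failed pod is replaced by a new one.
                "restartPolicy": "Never",
                "containers": [
                    {
                        "name": "report",
                        "image": "busybox:1.36",
                        "command": ["sh", "-c", "echo generating report"],
                    }
                ],
            }
        },
    },
}

print(json.dumps(job, indent=2))
```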

Learn The Lingo

When you first start using Kubernetes, there are a lot of terms to learn. Understanding the ones below will help your team walk the walk and talk the talk.

Master – Because Kubernetes is a distributed orchestration platform, it needs a “head node” that administers your cluster. This node is the master. Recent Kubernetes documentation calls this role the control plane.

Node – A node is a single machine (virtual or physical) that is part of your cluster. Nodes do the actual work of running containers.

Pod – A pod is a group of one or more containers that run together on a single node. The containers in a pod share one IP address and can communicate with each other through localhost.
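A minimal sketch of a two-container pod, built as a Python dictionary and printed as JSON, might look like this. The pod name, labels, images, and the polling command are illustrative assumptions. The second container reaches the first over `localhost` because both share the pod's network namespace.

```python
import json

pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web", "labels": {"app": "web"}},
    "spec": {
        "containers": [
            {
                "name": "app",
                "image": "nginx:1.25",
                "ports": [{"containerPort": 80}],
            },
            {
                # Sidecar that polls the app container through localhost.
                "name": "poller",
                "image": "busybox:1.36",
                "command": [
                    "sh",
                    "-c",
                    "while true; do wget -q -O- http://localhost:80 >/dev/null; sleep 10; done",
                ],
            },
        ]
    },
}

print(json.dumps(pod, indent=2))
```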

Service – A Kubernetes abstraction built on top of individual pods. Each service groups a set of pods that run the same program on the same port and exposes them under a single IP address, load balancing requests across the individual pods.
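A service usually finds its pods through a label selector. The sketch below shows a minimal Service manifest as a Python dictionary, plus a small helper that mimics equality-based selector matching. The helper, the pod names, and the labels are hypothetical, and the real matching is done inside the cluster rather than in client code.

```python
def selects(selector: dict, pod_labels: dict) -> bool:
    """A pod matches when it carries every key/value pair in the selector."""
    return all(pod_labels.get(key) == value for key, value in selector.items())


service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "web"},
    "spec": {
        "selector": {"app": "web"},
        # Traffic to the service on port 80 is forwarded to port 8080 on the pods.
        "ports": [{"port": 80, "targetPort": 8080}],
    },
}

# Hypothetical pods and their labels.
pods = {
    "web-1": {"app": "web", "tier": "frontend"},
    "web-2": {"app": "web", "tier": "frontend"},
    "db-1": {"app": "db"},
}

backends = sorted(
    name
    for name, labels in pods.items()
    if selects(service["spec"]["selector"], labels)
)
print(backends)  # prints ['web-1', 'web-2']
```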

Replication Controller – Ensures that the specified number of replicas of a pod is running on your cluster at any given time.
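Conceptually, this is a reconciliation loop: compare the desired count with what is running and act on the difference. The function below is a simplified sketch of one such step, not the controller's actual implementation. Its name, its return shape, and the choice to delete surplus pods from the end of the list are assumptions, and it expects a non-negative desired count.

```python
def reconcile(desired: int, running: list) -> dict:
    """Return the actions needed to move from the running pods to `desired`."""
    missing = desired - len(running)
    if missing > 0:
        # Too few pods: ask for the shortfall to be created.
        return {"create": missing, "delete": []}
    # Enough or too many pods: remove any surplus from the end of the list.
    return {"create": 0, "delete": running[desired:]}


print(reconcile(3, ["web-1"]))
print(reconcile(1, ["web-1", "web-2", "web-3"]))
```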

DaemonSet Controller – Ensures that a copy of a given pod runs on every node in the cluster (or on a selected subset of nodes).

Deployment – Provides declarative updates for Pods and ReplicaSets (the successor to the ReplicationController). You describe the desired state in a Deployment object, and the Deployment controller changes the actual state to the desired state at a controlled rate.
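As a sketch of what such a desired state can look like, the snippet below builds a Deployment manifest as a Python dictionary and prints it as JSON. The name, labels, image, replica count, and rolling-update settings are illustrative assumptions. The selector's `matchLabels` must match the pod template's labels, so both reuse the same dictionary.

```python
import json

labels = {"app": "web"}

deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "replicas": 3,
        "selector": {"matchLabels": labels},
        "strategy": {
            "type": "RollingUpdate",
            # Add at most one extra pod during an update and never drop
            # below the desired count.
            "rollingUpdate": {"maxSurge": 1, "maxUnavailable": 0},
        },
        "template": {
            "metadata": {"labels": labels},
            "spec": {"containers": [{"name": "app", "image": "nginx:1.25"}]},
        },
    },
}

print(json.dumps(deployment, indent=2))
```

Assuming the output is saved to a file such as `deployment.json`, it could be submitted with `kubectl apply -f deployment.json`. Changing the image tag and applying again would trigger a rolling update.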
